In order to take advantage of minimal logging and parallel insert (into heaps or clustered columnstore indexes), you have probably seen that it is a prerequisite to write your insert statements like this:

INSERT INTO DestTable WITH( TABLOCK ) ( [Column list ... ] ) SELECT [...]

If you are moving a lot of data in batches across multiple sessions, you might expect it is OK to run statements like this concurrently with each other because execution of the statements will serialize; they all need a table-level exclusive (X) lock to run.

That is not the case. SQL Server will attempt to get an IX lock first, then convert that lock to a BU, or “bulk update” lock. This can cause problems when multiple statements targeting the same destination table run at the same time, even though the BU lock mode is compatible with itself.

If you are playing along at home, you can try this.

Create a target table:

DROP TABLE IF EXISTS dbo.BulkUpdateDestinationHeap; CREATE TABLE dbo.BulkUpdateDestinationHeap ( Id bigint ); GO

In one session, get a lock on the table and hold it. We do this simply so we can set up other sessions to insert into the table.

BEGIN TRANSACTION; SELECT Id FROM dbo.BulkUpdateDestinationHeap WITH ( TABLOCK, HOLDLOCK );

In two separate sessions, try to load some records into the table using the code pattern mentioned above:

INSERT INTO dbo.BulkUpdateDestinationHeap WITH ( TABLOCK )

( Id )

SELECT TOP 1000000

ROW_NUMBER() OVER ( ORDER BY ( SELECT NULL ) )

FROM master..spt_values t1

CROSS JOIN master..spt_values t2;

In a fourth session, you can see what the existing locks and lock requests look like by looking at the sys.dm_tran_locks DMV. Here’s a query that will just return the relevant columns:

SELECT Locks.request_mode,

Locks.resource_type,

Tables.name resource_name,

Locks.request_status,

Locks.request_session_id

FROM sys.dm_tran_locks Locks

INNER JOIN sys.tables Tables

ON Locks.resource_associated_entity_id = Tables.object_id

WHERE Locks.resource_type <> 'DATABASE' -- ignore DATABASE lock

ORDER BY Locks.request_session_id,

Locks.request_mode;

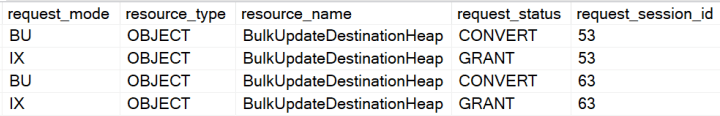

This is what I see:

Everything seems OK for now. Since IX locks are not compatitlble with S locks, both IX lock requests are waiting. However, since an IX lock is compatible with itself, both lock requests are granted as soon as the transaction in the first session commits or rolls back. When that happens, we see these lock requests:

If you wait a few seconds, eventually one of the sessions will be killed by the deadlock manager. This happens because each session needs to convert its IX lock to a BU lock to continue. BU locks are compatible with one another, but the BU lock mode is not compatible with IX. The BU lock request from session 53 is blocked by the currently held IX lock in session 63. Similarly, the BU lock request from session 63 is blocked by the currently held IX lock in session 53.

The XML deadlock report tells the same story in the resource list:

<resource-list>

<objectlock lockPartition="0"

objid="658101385"

subresource="FULL"

dbid="5"

objectname="LockDemos.dbo.BulkUpdateDestinationHeap"

id="lock26db1093b00"

mode="IX"

associatedObjectId="658101385">

<owner-list>

<owner id="process26db55648c8"

mode="IX" />

<owner id="process26db55648c8"

mode="BU"

requestType="convert" />

</owner-list>

<waiter-list>

<waiter id="process26dc4057468"

mode="BU"

requestType="convert" />

</waiter-list>

</objectlock>

<objectlock lockPartition="0"

objid="658101385"

subresource="FULL"

dbid="5"

objectname="LockDemos.dbo.BulkUpdateDestinationHeap"

id="lock26db1093b00"

mode="IX"

associatedObjectId="658101385">

<owner-list>

<owner id="process26dc4057468"

mode="IX" />

<owner id="process26dc4057468"

mode="BU"

requestType="convert" />

</owner-list>

<waiter-list>

<waiter id="process26db55648c8"

mode="BU"

requestType="convert" />

</waiter-list>

</objectlock>

</resource-list>

As for workarounds, depending on your workload you might consider using TABLOCKX instead of TABLOCK. When TABLOCKX is used, SQL Server requests and waits for an X lock on the object instead of an IX lock that it will convert to a BU lock. We don’t encounter the deadlock and both sessions can insert data into the target table. The sessions block each other and are forced to serialize, but there is no deadlock when we use TABLOCKX. You can run through the same demo above using TABLOCKX instead of TABLOCK to confirm.

We still get parallel insert when using TABLOCKX. This can be checked by looking at the query plan.

I wanted to check how many rows were logged to the transaction log to make sure I was still getting minimal logging. The function fn_dblog is undocumented but I have seen people use it for this purpose. I saw exactly the same number of rows logged whether I was using TABLOCK or TABLOCKX. I get 6280 rows for both queries (most times, it does not seem to be deterministic) when using the simple recovery model and 9191 rows when using the full recovery model. This is evidence to me that we still get minimal logging too. Here’s the code I used to test that:

/*

Compare how many rows are written to the transaction log

when using TABLOCK v. using TABLOCKX

*/

DECLARE @MaxLsn nvarchar(25);

-- truncate the table

TRUNCATE TABLE dbo.BulkUpdateDestinationHeap;

-- get max LSN

SELECT @MaxLsn = N'0x' + MAX( [Current LSN] )

FROM sys.fn_dblog( NULL, NULL );

-- use tablock to insert rows

INSERT INTO dbo.BulkUpdateDestinationHeap WITH ( TABLOCK )

( Id )

SELECT TOP 1000000

ROW_NUMBER() OVER ( ORDER BY ( SELECT NULL ))

FROM master..spt_values t1

CROSS JOIN master..spt_values t2;

-- find out how many rows that was

SELECT COUNT( * ),

'Using TABLOCK'

FROM fn_dblog( @MaxLsn, NULL );

-- truncate again

TRUNCATE TABLE dbo.BulkUpdateDestinationHeap;

-- get max LSN

SELECT @MaxLsn = N'0x' + MAX( [Current LSN] )

FROM sys.fn_dblog( NULL, NULL );

-- use tablockx to insert rows

INSERT INTO dbo.BulkUpdateDestinationHeap WITH ( TABLOCKX )

( Id )

SELECT TOP 1000000

ROW_NUMBER() OVER ( ORDER BY ( SELECT NULL ))

FROM master..spt_values t1

CROSS JOIN master..spt_values t2;

-- find out how many rows that was

SELECT COUNT( * ),

'Using TABLOCKX'

FROM fn_dblog( @MaxLsn, NULL );

The lesson here isn’t to use TABLOCKX instead of TABLOCK. The lesson is that when specifying a “table lock”, you aren’t guaranteeing the lock mode, but rather what resources you will take locks on.